Every day, new technologies are being developed that will make life easier, more sophisticated, and better for everyone. Today, technological development is occurring at a nearly exponential rate. New technology assists businesses in lowering costs, improving customer experiences, and boosting profitability.

The rapid development of artificial intelligence (AI) and machine learning is just one example of how quickly technology is changing. These technologies are being used to improve productivity and decision-making across a range of industries, including healthcare, banking, and retail. This article discusses the top emerging technology trends for 2023. Keep reading!

Another example is the expansion of the Internet of Things (IoT), a growing network of connected devices capable of communication and data exchange. The IoT makes it possible to develop new products and services that rely on this connectivity, and it is changing how we interact with our environment. Technology is developing quickly, and this trend is expected to persist as new technologies are developed and put into use.

The following is a list of technology trends that could emerge in 2023 as we move closer to a future packed with cutting-edge technology:

Artificial Intelligence and Machine Learning

Artificial intelligence (AI) is the development of computer systems capable of performing tasks that typically require human intelligence, such as learning, problem-solving, and decision-making.

A branch of AI known as machine learning (ML) uses statistical models and algorithms to help computers learn and get better at a given task without having to be explicitly programmed. Large datasets are used to train ML algorithms, which can then act or predict based on the patterns and trends found in the data.
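To make the idea of "learning from data" concrete, here is a minimal sketch in plain Python. It fits a straight line to a handful of example points using ordinary least squares, then uses the fitted line to predict a value it has never seen. The data, the hidden rule (y = 2x + 1), and the function names `fit_line` and `predict` are all illustrative assumptions; real ML systems use far larger datasets and more capable models.

```python
# Minimal sketch: "learning" a linear rule from examples with ordinary
# least squares. Data and function names are illustrative assumptions.

def fit_line(xs, ys):
    """Return (slope, intercept) that minimize squared error on the data."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply the fitted line to a new input."""
    slope, intercept = model
    return slope * x + intercept


# Training examples generated by the hidden rule y = 2x + 1.
# The rule itself is never written into fit_line or predict.
train_x = [0, 1, 2, 3, 4]
train_y = [1, 3, 5, 7, 9]

model = fit_line(train_x, train_y)
print(predict(model, 10))  # 21.0
```

The program is never told the rule; it recovers the slope and intercept from the examples alone, which is the sense in which ML works "without being explicitly programmed."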

Businesses across many industries are using AI and ML to improve productivity and decision-making.

Internet of Things (IoT)

The term “Internet of Things” (IoT) describes an expanding network of devices that are linked to the Internet and can exchange data and communicate with one another. These connected devices can be anything from simple sensors to more complicated ones like household appliances, automobiles, and industrial machinery.

IoT devices provide data collection and transmission capabilities, as well as remote access and control capabilities. This makes it possible to develop new services and goods that rely on connectivity and data exchange.

IoT is transforming how we live, work, and interact with the world.

Augmented Reality (AR) and Virtual Reality (VR)

Augmented reality (AR) overlays computer-generated images and sounds on the real world, while virtual reality (VR) immerses users in a fully computer-generated environment. Adoption of AR and VR in sectors including gaming, education, healthcare, and retail gives users new levels of immersion and interaction.

Healthcare practitioners can leverage AR and VR to simulate difficult operations and surgeries, and these technologies can improve education by offering immersive learning experiences. AR and VR can be employed in retail to offer clients customized shopping experiences and interactive product encounters.

These technologies will influence more and more sectors of the economy and aspects of society as they advance and become more widely available.

Blockchain

Blockchain is a digital ledger that allows for secure and transparent transactions without an intermediary. Businesses in sectors like finance, healthcare, and logistics are expected to use blockchain technology to build secure and transparent networks. Businesses will also be able to design new products and services on top of blockchain technology, such as non-fungible tokens (NFTs) and decentralized finance (DeFi).

In addition, blockchain is expected to become more scalable and energy-efficient, enabling wider adoption and use across numerous industries. Also, it is anticipated that there will be greater collaboration and creativity due to improved interoperability between various blockchain networks and platforms.
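The "digital ledger" idea can be illustrated with a toy hash chain. In the sketch below, each block stores the hash of the previous block, so changing an old entry breaks every later link and is easy to detect. This is a simplified illustration only: the block layout and the helper names `block_hash`, `append_block`, and `is_valid` are assumptions, and real blockchains add consensus, digital signatures, and peer-to-peer replication that are left out here.

```python
import hashlib
import json

GENESIS_PREV_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, data, prev_hash):
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain, data):
    """Add a new block that points at the current end of the chain."""
    index = len(chain)
    prev_hash = chain[-1]["hash"] if chain else GENESIS_PREV_HASH
    chain.append({
        "index": index,
        "data": data,
        "prev_hash": prev_hash,
        "hash": block_hash(index, data, prev_hash),
    })


def is_valid(chain):
    """Check every link and every stored hash in the chain."""
    for i, block in enumerate(chain):
        expected_prev = chain[i - 1]["hash"] if i > 0 else GENESIS_PREV_HASH
        if block["prev_hash"] != expected_prev:
            return False
        recomputed = block_hash(block["index"], block["data"], block["prev_hash"])
        if block["hash"] != recomputed:
            return False
    return True


ledger = []
append_block(ledger, "Alice pays Bob 5")
append_block(ledger, "Bob pays Carol 2")
print(is_valid(ledger))  # True

# Tampering with an earlier entry is detected on the next check.
ledger[0]["data"] = "Alice pays Bob 500"
print(is_valid(ledger))  # False
```

Because every participant can recompute the hashes, no central intermediary is needed to confirm that the history has not been altered.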

Cloud Computing

Cloud computing technology allows users to access computing resources like servers, storage, and applications online. Healthcare, finance, and logistics are just a few of the sectors that will employ cloud computing to cut costs, expand scalability, and boost productivity. Cloud computing will allow companies to create new services and products, such as cloud-based software and platform-as-a-service (PaaS) offerings.

Hybrid cloud solutions, which combine private and public cloud services to offer greater flexibility, scalability, and cost-effectiveness, are one of the newest major developments in cloud computing. With them, organizations can benefit from both private and public clouds while addressing their specific business requirements.

Moreover, 2023 is anticipated to see the continued development of new cloud-native technologies, including serverless computing and containerization. With the help of these technologies, developers can create and deploy apps more rapidly and effectively without having to worry about complicated infrastructure administration.

Quantum Computing

Quantum computing processes data using quantum-mechanical principles. Traditional computers use bits, whereas quantum computers use qubits. This allows quantum computers to perform some tasks considerably faster than traditional computers.
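One way to see how a qubit differs from a bit is to simulate a single qubit on an ordinary computer. A classical bit is either 0 or 1, while a qubit's state is a pair of amplitudes whose squared magnitudes give the probability of measuring 0 or 1. The sketch below applies a Hadamard gate, a standard single-qubit operation, to put a qubit into an equal superposition. The function names are illustrative assumptions, and a classical simulation like this demonstrates the math only, not any quantum speedup.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp_0, amp_1)."""
    amp_0, amp_1 = state
    scale = 1 / math.sqrt(2)
    return (scale * (amp_0 + amp_1), scale * (amp_0 - amp_1))


def measurement_probabilities(state):
    """Probability of measuring 0 and 1: the squared amplitude magnitudes."""
    amp_0, amp_1 = state
    return (abs(amp_0) ** 2, abs(amp_1) ** 2)


zero = (1.0, 0.0)             # the qubit state |0>, analogous to a classical 0
superposed = hadamard(zero)   # equal superposition of |0> and |1>

p0, p1 = measurement_probabilities(superposed)
print(round(p0, 3), round(p1, 3))  # 0.5 0.5

# Applying the gate a second time returns the qubit to |0>.
restored = hadamard(superposed)
```

After the gate, the qubit is neither 0 nor 1 until it is measured, and either outcome is equally likely.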

Fields like materials science, drug development, and financial modeling could all be significantly changed by quantum computers. The technology is still in the early stages of development, however, and has a number of technical obstacles to overcome before it can be widely used.

Many businesses are working to create quantum computing technology, including Splunk, Honeywell, Microsoft, Amazon, and Google. By 2029, revenue from the quantum computing sector is projected to reach over $2.5 billion. To succeed in this field, knowledge of quantum mechanics, linear algebra, probability, information theory, and machine learning is beneficial.

Datafication

Datafication is the process of gathering, arranging, and visualizing data so that it is simple to understand and use. This may entail generating graphs, charts, or other visuals. It facilitates decision-making based on data and sharing findings with others.

Datafication is expected to play a significant role in data analysis and decision-making because it enables firms to use their data more effectively. In 2023, it may involve more advanced visualization and analysis tools that can produce interactive, sophisticated presentations.

Cybersecurity

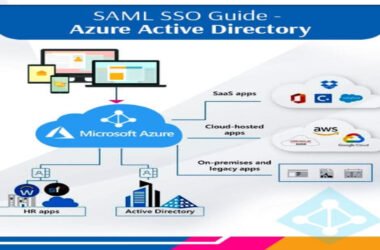

Cybersecurity encompasses the practices and technologies used to protect computer networks, devices, and systems from online threats such as malware, hacking, and data breaches. These threats are increasing and evolving as technology use expands. As more devices and systems become connected, the importance of cybersecurity is expected to grow.

The future of cybersecurity is expected to be shaped by several trends. One is the rising use of cloud computing, which is the internet-based delivery of computing services. Although cloud computing has numerous advantages, like enhanced productivity and cost savings, it also presents new security risks, including the need to safeguard data that is processed and stored in the cloud.

Another trend is the expansion of the Internet of Things (IoT), which refers to the rise of internet-connected devices, including smart home appliances, medical equipment, and industrial machinery. As the IoT grows, new security issues are anticipated to arise since these devices may be more susceptible to online threats because of their low processing power and absence of built-in security features.

The growing application of artificial intelligence (AI) and machine learning in cybersecurity is a third trend. Although these technologies are being used to improve the accuracy and effectiveness of cybersecurity systems, they also raise concerns that AI could be used maliciously.

Edge Computing

As the amount of data generated by connected devices continues to increase, edge computing is becoming essential. It involves using computing resources and data processing capabilities at or near the “edge” of a network rather than at a central location like a data center. This is particularly useful when low latency (a short delay between sending data and getting a result) is crucial or when sending data to a central location for processing is impractical or expensive.
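The trade-off described above can be sketched in a few lines. Instead of sending every raw sensor reading to a data center, a device at the edge reduces the readings to a small summary and decides locally whether something needs attention. The readings, the threshold, and the function name `summarize_at_edge` are hypothetical values chosen for illustration.

```python
from statistics import mean


def summarize_at_edge(readings, alert_threshold):
    """Reduce raw readings to a compact summary on the edge device itself."""
    highest = max(readings)
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": highest,
        # The alert is decided locally, with no round trip to a data center.
        "alert": highest > alert_threshold,
    }


# Hypothetical temperature readings collected by one sensor.
raw_readings = [21.0, 21.5, 22.0, 35.0, 21.5]

summary = summarize_at_edge(raw_readings, alert_threshold=30.0)
print(summary)
```

Only the four-field summary would need to travel over the network, and the alert is raised without waiting on a remote server, which is where the latency and bandwidth savings come from.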

The adoption and development of edge computing are expected to be influenced by a number of variables. They include the development of the Internet of Things (IoT), the rise in real-time data processing and decision-making requirements, and the requirement to manage substantial amounts of data produced by devices and sensors.

Zero Trust Security

The Zero Trust Security model is a cybersecurity approach that mandates authentication and authorization for all users and devices before allowing them access to a network, with no user or device trusted by default. This model is gaining popularity in industries like finance, healthcare, and logistics, as it helps companies establish secure and transparent networks. With Zero Trust Security, companies can reduce the risk of threats like data breaches and cyber-attacks.
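As a rough illustration of the model, the sketch below checks every request for three things: a valid identity, a recognized device, and explicit permission for the requested action. Anything that is not explicitly allowed is denied. The tokens, device IDs, permission table, and the `authorize` function are all hypothetical; a production system would rely on an identity provider, device attestation, and a policy engine rather than in-memory tables.

```python
# Hypothetical in-memory tables standing in for real identity and policy services.
VALID_TOKENS = {"token-alice": "alice", "token-bob": "bob"}
TRUSTED_DEVICES = {"laptop-42"}
PERMISSIONS = {
    ("alice", "payroll-db"): {"read"},
}


def authorize(token, device_id, resource, action):
    """Allow a request only if the user, the device, and the action all check out."""
    user = VALID_TOKENS.get(token)
    if user is None:
        return False  # authentication failed: unknown or missing token
    if device_id not in TRUSTED_DEVICES:
        return False  # the device is verified too, not just the user
    allowed_actions = PERMISSIONS.get((user, resource), set())
    return action in allowed_actions  # default deny: no entry means no access


print(authorize("token-alice", "laptop-42", "payroll-db", "read"))    # True
print(authorize("token-alice", "laptop-42", "payroll-db", "write"))   # False
print(authorize("token-alice", "unknown-pc", "payroll-db", "read"))   # False
print(authorize("token-bob", "laptop-42", "payroll-db", "read"))      # False
```

The key design choice is that the check runs on every request and falls back to denial, so being "inside the network" grants nothing on its own.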

Conclusion

These technological trends are anticipated to have a substantial effect on many industries and on daily life in 2023. But to use these technologies effectively, one must have a thorough awareness of both their strengths and weaknesses as well as an innovative mindset. Companies must be prepared to take calculated risks, invest in research and development, and adapt to evolving market conditions. Those who succeed in putting these new technologies into practice will be better positioned to flourish in a rapidly changing business environment.